Cognition in the Land of Engineering (1/2)

A brief review of human-hacking

Men are not prisoners of fate, but only prisoners of their own minds.

– Franklin Roosevelt, Pan American Day address, April 15, 1939

Thanks for stopping by! This is the first in a series of posts on augmenting cognition, the messy constellation of all things brain-boosting. Read on for war games, creativity injection, and other quick peeks into AugCog’s recent history…

Estimated Read Time: 7 minutes

Set Dressing

When did we, as a species, begin to understand our own cognition? How far back should we go: to the 1970s, with the first issue of Cognitive Science? To around 1000 A.D., with Al-Zahrawi’s written treatments for neurological disorders? Or maybe to the second century A.D., when Roman physician Galen attributed cognition to the brain? Various theories of mind predate even Galen, and some might be old enough to have escaped written record entirely. And yet, for as long as we’ve sought to understand our cognition (and perhaps even before then), we’ve also sought to improve it.

I’m relatively new to the formal study of cognition, but I’ve been interested in augmenting my own since long before I knew what that meant.

Fueled by Flatland, I tried (and failed) to enlist my eighth-grade peers in a journey toward four-dimensional spatial awareness with Charles Howard Hinton’s cubes; in high school, I started a math team and tried to develop my problem-solving rigorously, mostly through texts (Polya, Zeitz) and their exercises.

It wasn’t until I took NC State’s ECE 505 (Brain-Computer Interfaces), concurrently with my first neuroscience course (and after three in CogSci), that I was asked to survey the literature on augmented cognition.

I’ve decided to distill that initial review, and my further studies, into a series of posts on this Substack. I won’t dive too deep today; specific topics, like real-life thinking caps or the cognitive case for bilingual children, will get their own posts.

I will also avoid ethical questions, for now—there are some big ones to visit later.

Today’s Menu

What’s Augmented Cognition?

Optimizing learning with cognitive-load monitoring

Next Week

Supercharging problem-solving with TES

Enhancing memory with deep-brain stimulation

Indirect avenues of boosting cognition

What’s Augmented Cognition?

Let’s orient ourselves. I’ll borrow a reasonably broad definition from Cinel et al. (in a fantastic review that informs much of this post):

any improvement over the functionality already available in an individual.

There are many ways to reach this goal, including:

Environmental

Electrical

Pharmaceutical

Linguistic

For now, from the highest bird’s-eye view, we might cluster methods of augmenting cognition into two groups: those that measure the brain and act around it, and those that stimulate the brain, directly or indirectly.

For the electrical engineers among us, this division can be thought of as read/write. To avoid a 3000-word blog post, we’ll cover read methods today and write methods next time.

Monitoring Cognitive Load

Most “read” methods of augmenting cognition involve monitoring cognitive load.

Measurements of cognitive load, a concept first formulated by Sweller in 1988, attempt to quantify a person’s mental effort during a task or series of tasks. Sweller’s initial paper introduced some of the prevailing themes surrounding cognitive load today: first, that it can be measured; second, that it can illuminate how cognition struggles with difficult problems; and, finally, that this knowledge can be used to optimize human learning (and, relatedly, human cognition).

Measurement Methods

There are many features considered important for measuring cognitive load. Some, like heart-rate variability (HRV), are easy to measure (for example, via photoplethysmography, or PPG) but are also lossy and insufficient on their own.
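To make “easy to measure” concrete: one common time-domain HRV summary is RMSSD, the root mean square of successive differences between inter-beat intervals, which can be computed from the pulse peaks a PPG sensor detects. Below is a minimal sketch, assuming the inter-beat intervals (in milliseconds) have already been extracted; the `rmssd` helper and the interval values are illustrative, not taken from any study.

```python
import math


def rmssd(ibi_ms: list[float]) -> float:
    """Root mean square of successive differences (RMSSD) of inter-beat intervals.

    ibi_ms: inter-beat intervals in milliseconds, e.g. derived from PPG
    peak-to-peak times (peak detection itself is not shown here).
    """
    if len(ibi_ms) < 2:
        raise ValueError("need at least two intervals")
    # Differences between each pair of consecutive intervals.
    diffs = [b - a for a, b in zip(ibi_ms, ibi_ms[1:])]
    # Mean of the squared differences, then the square root.
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


# Made-up intervals (ms) for illustration only.
intervals = [800, 810, 790, 805, 795]
print(round(rmssd(intervals), 2))
```

The whole recording collapses into a single number, which is part of why HRV is cheap to obtain but lossy as a window into cognition.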

In contrast, functional near-infrared spectroscopy (fNIRS), which measures changes in blood oxygenation in the brain, is a more complex measurement modality, but proves far more informative. EEG can be used as well, and is simpler than fNIRS. However, fNIRS is currently the most widely adopted and successful measurement modality in work on cognitive load, as far as I can tell.
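For the curious: continuous-wave fNIRS devices typically turn changes in detected light into oxygenation changes using the modified Beer-Lambert law. At each wavelength λ, the change in optical density is ΔOD(λ) = (ε_HbO(λ)·Δ[HbO] + ε_HbR(λ)·Δ[HbR])·d·DPF(λ), where the ε terms are extinction coefficients, d is the source-detector distance, and DPF is the differential pathlength factor. Two wavelengths give two equations in two unknowns. The sketch below solves that system; the coefficients, distance, and DPF are placeholder numbers chosen for illustration (real systems use tabulated, calibrated values), and the helper names are hypothetical.

```python
# Placeholder extinction coefficients (eps_HbO, eps_HbR) in arbitrary units.
# NOT real calibration values; chosen only so that HbR absorbs more at 760 nm
# and HbO absorbs more at 850 nm, the usual qualitative pattern.
EPS = {760: (0.6, 1.5), 850: (1.1, 0.8)}


def delta_od(d_hbo, d_hbr, wavelength, distance_cm=3.0, dpf=6.0):
    """Forward model: optical-density change at one wavelength (modified Beer-Lambert)."""
    e_hbo, e_hbr = EPS[wavelength]
    return (e_hbo * d_hbo + e_hbr * d_hbr) * distance_cm * dpf


def solve_mbll(dod_760, dod_850, distance_cm=3.0, dpf=6.0):
    """Recover (delta_HbO, delta_HbR) from optical-density changes at two wavelengths.

    Divides out the path length d * DPF (assumed equal at both wavelengths here),
    then solves the 2x2 linear system by Cramer's rule.
    """
    a, b = EPS[760]
    c, e = EPS[850]
    y1 = dod_760 / (distance_cm * dpf)
    y2 = dod_850 / (distance_cm * dpf)
    det = a * e - b * c
    d_hbo = (y1 * e - b * y2) / det
    d_hbr = (a * y2 - c * y1) / det
    return d_hbo, d_hbr


# Round trip: simulate readings from known changes, then invert them.
od_760 = delta_od(0.02, -0.01, 760)
od_850 = delta_od(0.02, -0.01, 850)
print(solve_mbll(od_760, od_850))  # approximately (0.02, -0.01)
```

The second wavelength is what lets the system separate oxygenated from deoxygenated hemoglobin; with one wavelength, the two changes would be indistinguishable.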

Cognitive-load monitoring appears most often in scenarios where significant cognitive effort is expected, namely strenuous tasks and learning environments. The most common proxy for cognitive load is entirely subjective: self-reporting. Teachers often use it in classrooms to gauge cognitive load during learning, and surgeons use it during surgery.

Objective alternatives are desirable, though not all end up superior to the subjective default. (HRV, the chubby also-ran of cognitive correlates, performs worse.)

Invasive methods of brain recording also find use in cognitive-load monitoring, but they are far, far less common. fNIRS, perhaps striking a balance between ease of use and fidelity, seems to dominate the space.

Predicting Performance: DARPA’s Warship Commander

With the motivating hypothesis that good cognitive-load measures can predict task performance, it’s no surprise that most studies in the space are coupled to some task environment, often a stressful or demanding one, which is then optimized per the measure under study.

To my knowledge, the first major study of cognitive-load measurement in a task environment (at least, through brain signals; Sweller’s measurements were less direct) came from DARPA in 2003, as part of a series of experiments started in 2001.

The task involved a game called Warship Commander, where the player must identify incoming planes as friendly or enemy, warning and/or destroying enemies using standard rules of engagement. (Fun!) Simulation complexity could be varied with the number and speed of incoming planes.

Researchers in these experiments observed not only that fNIRS measurements of oxygenation change correlated significantly with cognitive load, but also that they correlated fairly well with task performance, shown above (R² = 0.7899). The researchers hypothesized that this resulted from sustained concentration, which would produce larger oxygenation changes and would also (presumably) lead to higher scores.
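If R² is unfamiliar: it is the coefficient of determination, the fraction of variance in one variable explained by a fitted line. Assuming the reported value comes from an ordinary least-squares fit with a single predictor (I haven’t confirmed the study’s exact procedure), it equals the square of Pearson’s correlation r, so R² = 0.7899 corresponds to |r| ≈ 0.89. The sketch below checks that identity on hypothetical numbers; the data and helper names are invented for illustration and are not the DARPA measurements.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    return sxy / math.sqrt(sxx * syy)


def r_squared_linear_fit(x, y):
    """R^2 = 1 - SS_res / SS_tot for an ordinary least-squares line y = intercept + slope * x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sum((xi - mx) ** 2 for xi in x)
    intercept = my - slope * mx
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return 1 - ss_res / ss_tot


# Hypothetical data: oxygenation change (arbitrary units) vs. task score.
oxy = [0.1, 0.3, 0.4, 0.6, 0.8, 0.9]
score = [52, 60, 58, 71, 75, 83]
r = pearson_r(oxy, score)
print(round(r ** 2, 4), round(r_squared_linear_fit(oxy, score), 4))  # the two values agree
```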

Optimizing Learning with Brain-in-the-Loop

I mentioned optimization earlier—what’s the point of predicting performance if you can’t use that information? Perhaps the most compelling example of cognitive-load monitoring enabling task optimization comes in the form of Brain-in-the-Loop learning.

Brain-in-the-loop learning (as it appeared in 2013) offered a vision of learning tailored to your personal cognition.

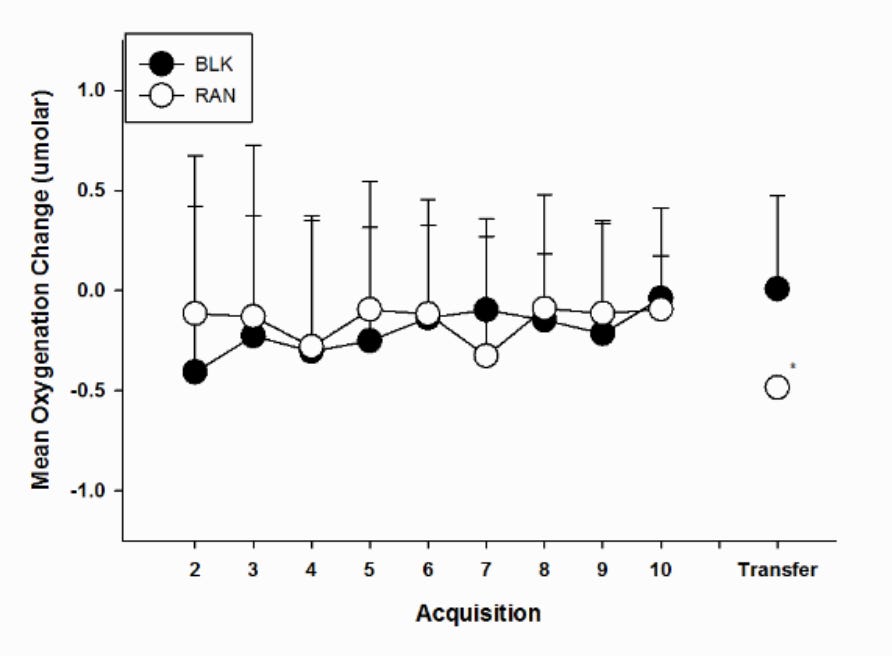

The experiment was simple enough: participants wearing fNIRS caps were trained to navigate three spatial mazes for nine days, before being asked to navigate two novel, more difficult mazes on Day 10. One group—the Blocked group—navigated each of the three mazes once per day. The Randomized group, on the other hand, navigated the same total number of each maze, but distributed randomly across the training period.
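The two training schedules are easy to mock up. A minimal sketch, assuming three mazes with hypothetical labels A, B, and C, nine training days, and three runs per day (one per maze, as described for the Blocked group); how the Randomized group’s runs were split across days is an assumption here, not the study’s documented protocol.

```python
import random
from collections import Counter

MAZES = ["A", "B", "C"]  # hypothetical labels for the three training mazes
DAYS = 9


def blocked_schedule(days=DAYS):
    """Each maze once per day, in the same fixed order every day."""
    return [list(MAZES) for _ in range(days)]


def randomized_schedule(days=DAYS, seed=None):
    """Same total runs of each maze, shuffled across the whole training period."""
    rng = random.Random(seed)
    runs = MAZES * days  # `days` copies of each maze
    rng.shuffle(runs)
    per_day = len(MAZES)
    # Regroup the shuffled runs into days of equal length (an assumption).
    return [runs[i:i + per_day] for i in range(0, len(runs), per_day)]


def totals(schedule):
    """Count how many times each maze appears across all days."""
    return Counter(maze for day in schedule for maze in day)


blocked = blocked_schedule()
randomized = randomized_schedule(seed=0)
print(totals(blocked) == totals(randomized))  # identical totals per maze
```

Only the ordering differs between the two groups; the total practice on each maze is held constant, which is what makes the comparison meaningful.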

Participants’ oxygenation changes (measured by fNIRS) were fairly similar between the two groups during training; but when participants were asked to navigate the new mazes (in effect, to transfer their learning), the measurements diverged markedly.

Oxygenation change was significantly lower for the Randomized group. In a sense, they navigated more efficiently, handling the unfamiliar mazes with less measured cognitive load.

Here, fNIRS enabled a quantitative comparison (via cognitive load) of two different learning methods: navigation in a fixed versus random maze order.
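What might that quantitative comparison look like? One standard choice for comparing the means of two independent groups is Welch’s t-test, which does not assume equal variances. I don’t know which test the 2013 study actually used, so treat this as a sketch of the mechanics only: the oxygenation numbers are invented, and the hypothetical `welch_t` helper returns the t statistic and the Welch-Satterthwaite degrees of freedom. Turning those into a p-value needs a t-distribution CDF; with SciPy installed, `scipy.stats.ttest_ind(a, b, equal_var=False)` runs the whole test in one call.

```python
import math
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom for two samples."""
    # Squared standard errors of each group's mean (sample variance / n).
    va, vb = variance(a) / len(a), variance(b) / len(b)
    t = (mean(a) - mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


# Invented transfer-maze oxygenation changes (arbitrary units), NOT study data.
blocked_oxy = [0.42, 0.38, 0.45, 0.40, 0.47, 0.39]
randomized_oxy = [0.31, 0.35, 0.29, 0.33, 0.36, 0.30]
t, df = welch_t(blocked_oxy, randomized_oxy)
print(f"t = {t:.2f}, df = {df:.1f}")  # positive t: the Blocked mean is higher
```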

Though it may seem simplistic, this opens the door to learning environments that are more fluid, which change to better match the mind. We can easily imagine a more complex, “real-time” version of brain-in-the-loop deployed, for example, to online education, adjusting exercises or problem sets to optimally engage students.
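A toy version of that real-time loop might smooth incoming load estimates, then nudge difficulty down when load runs high and up when it runs low. Everything in this sketch is hypothetical: the `AdaptiveTutor` class and its `update` method, the assumption that fNIRS output has already been mapped to a 0-to-1 load score, the thresholds, and the smoothing weight. A real system would need a validated mapping from signals to load.

```python
from dataclasses import dataclass


@dataclass
class AdaptiveTutor:
    """Toy brain-in-the-loop controller; every parameter here is hypothetical."""

    difficulty: int = 5          # current problem difficulty, 1 (easy) to 10 (hard)
    smoothed_load: float = 0.5   # running load estimate on an assumed 0..1 scale
    alpha: float = 0.3           # exponential-smoothing weight for each new reading
    low: float = 0.35            # below this, the learner is likely under-challenged
    high: float = 0.75           # above this, the learner is likely overloaded

    def update(self, load_reading: float) -> int:
        """Fold in one normalized load reading and return the next difficulty."""
        self.smoothed_load = self.alpha * load_reading + (1 - self.alpha) * self.smoothed_load
        if self.smoothed_load > self.high:
            self.difficulty = max(1, self.difficulty - 1)   # ease off
        elif self.smoothed_load < self.low:
            self.difficulty = min(10, self.difficulty + 1)  # push harder
        return self.difficulty


tutor = AdaptiveTutor()
for reading in [0.9, 0.95, 0.9, 0.2, 0.1, 0.1]:  # made-up load readings
    print(tutor.update(reading))
```

Smoothing keeps one noisy reading from whipsawing the difficulty, and the gap between the two thresholds leaves a band where nothing changes.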

This sort of analysis (using cognitive load to optimize learning) even appeared in Sweller’s original formulation of the concept, where measures of cognitive load revealed a tension between “conventional problem-solving activity” and “schema acquisition” (the internalization of domain knowledge).

With the maturation (and miniaturization) of fNIRS, it feels entirely plausible, and maybe preferable, that the future of education will be form-fitted to the electrochemical dynamics of the mind.

That’s where I’ll leave off for today.

If you’re interested in learning more with me, subscribe to keep in step. We haven’t even gotten to thinking caps!

A note on the thumbnail: I don’t own it (obviously). It’s from NIRx, an fNIRS hardware manufacturer. Those are NIRx headsets; check them out if you’re in the market, I guess. (Or, if you’re more of a 3D-printing fan, check out OpenFNIRS.)